Meaning of transparency and explainability of an AI system and legal consequences

The need for trustworthy AI

The recent popularity of AI systems (especially LLMs), AI agents and, more generally, AI-based tools and applications has led to massive use of this technology in every corner of social life: businesses of all kinds, social networks, personal life and so on.

Besides questions about the political power acquired by the developers of such systems, much attention is directed at the risks of using AI systems and at the incidents that result.

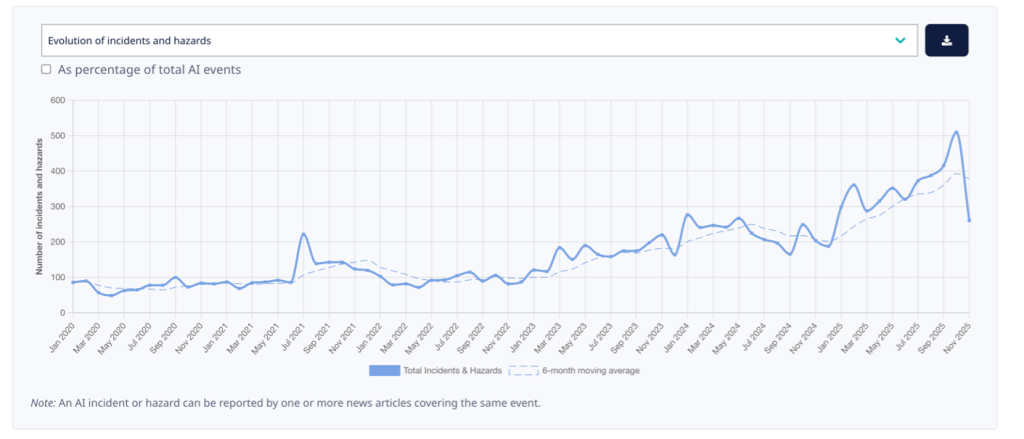

If you want to dive deeper into the typical risks, incidents and hazards related to the use of AI systems, you can consult the MIT AI Risk Repository or the OECD AI Incidents and Hazards Monitor.

The latter shows quite clearly that the number of AI-related incidents is rising rapidly as the use of AI systems spikes.

To contain such risks during the development, training and use of AI systems, several actors around the world have developed risk frameworks and principles. These measures and principles aim to promote “trustworthy AI”, a term that refers to “characteristics which help relevant stakeholders understand whether the AI system meets their expectations” (see ISO/IEC 22989:2022(E)).

For AI systems to be considered trustworthy, they often need to satisfy a range of criteria that matter to different stakeholders. While these criteria may sometimes influence each other and lead to trade-offs, strengthening AI trustworthiness generally helps mitigate risks.

Typically, the characteristics of trustworthiness are tightly intertwined with social and organizational practices; the data used to train and operate AI systems; the choice of models and algorithms; the design and governance decisions made by developers; and the ways humans contribute expertise, oversight, and accountability during deployment.

Human judgment is essential when selecting the metrics used to evaluate these characteristics and when setting the specific threshold values those metrics must meet.

Principles of trustworthy AI

While different AI risk frameworks may weigh the characteristics of trustworthy AI differently (e.g. giving more or less weight to privacy), the documents and guidelines published worldwide are broadly consistent.

Typical principles of trustworthy AI include (i) fairness, (ii) safety, (iii) privacy and security, (iv) transparency, (v) explainability and (vi) accountability.

The concept of “transparency”

The term “transparency” is broad and somewhat flexible, as its meaning may vary with the context. Generally, it involves communicating appropriate information about the AI system to stakeholders. This may include, for example, explaining how the system works, maintaining technical and non-technical documentation across the AI life cycle, and describing the system's goals and limitations, design choices, models and so on.

In addition to information about the system itself, transparency duties and best practices also include informing stakeholders about the data used in development (e.g. training, validation and testing data).

It is important to note that transparency typically does not mean a duty to disclose source code, other proprietary code or proprietary datasets. Rather, it means enabling people to understand how an AI system is developed, trained and deployed, and how it works in certain uses or environments.

The concept of “explainability”

Explainability differs from transparency. In particular, it refers to explaining to users how the AI system produces a certain output or takes a specific decision. This means giving stakeholders clear and accessible information so that they can understand which elements led to a certain outcome and how people (negatively) affected by the outcome can challenge it.

Transparency and explainability are both elements that enable a more trustworthy AI but they are not synonyms. While transparency relates to describing the system (both technical and non-technical aspects), explainability specifically relates to describing how the system goes from input to output and what factors influence the outcome.

Consequently, a system may be transparent but not explainable, or vice versa.
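To make the idea of explainability more concrete, the following minimal sketch shows one way a simple system could report which factors influenced an outcome. It assumes a hypothetical linear scoring model: the feature names, weights, bias and applicant values are invented for illustration and do not come from any real system or from the AI Act. Real-world models are usually more complex and may need dedicated explanation techniques.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Contribution:
    """One input factor and its effect on the final score."""
    feature: str
    value: float
    weight: float

    @property
    def impact(self) -> float:
        # In a linear model, each factor adds value * weight to the score.
        return self.value * self.weight


def explain_score(weights, bias, applicant):
    """Return the score and the factors ranked by absolute impact."""
    contributions = [
        Contribution(name, applicant[name], weight)
        for name, weight in weights.items()
    ]
    score = bias + sum(c.impact for c in contributions)
    ranked = sorted(contributions, key=lambda c: abs(c.impact), reverse=True)
    return score, ranked


# Hypothetical model parameters and input, for illustration only.
weights = {"income_k_eur": 0.5, "missed_payments": -4.0, "years_employed": 1.0}
applicant = {"income_k_eur": 40.0, "missed_payments": 3.0, "years_employed": 2.0}

score, ranked = explain_score(weights, bias=-10.0, applicant=applicant)

print(f"score = {score}")
for c in ranked:
    print(f"{c.feature}: value {c.value} x weight {c.weight} = impact {c.impact}")
```

A ranked list of this kind tells an affected person which elements weighed most on the result, which is the starting point for challenging it. Publishing the weights and the training data description, by contrast, would be a matter of transparency.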

What to do in practice

Providers operating in Europe or with European customers will be bound by the principles of transparency and explainability through hard law, namely the AI Act. This regulation imposes a duty to design and develop high-risk AI systems “in such a way as to ensure that their operation is sufficiently transparent to enable deployers to interpret a system’s output and use it appropriately”. In particular, under Art. 13(3) of the AI Act, such high-risk AI systems shall be accompanied by instructions for use that give deployers a wide range of information on the provider, the system itself and the data (training, validation and testing), as well as information that enables deployers to interpret the output.

Similarly, Art. 50 of the AI Act imposes transparency obligations on providers and deployers of certain AI systems that expose the public to particular risks. For example, providers of AI systems that interact directly with the public, and deployers of an AI system that generates or manipulates image, audio or video content constituting a deep fake, shall inform the public that it is interacting with an AI system or with AI-generated content.

For companies, it is fundamental to be able to show and explain the AI system, the data it uses, how it works and how it reaches its outcomes. This is typically done through documentation and easy-to-understand explanations (e.g. documents, diagrams and so on).
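As an illustration of how such documentation could be tracked internally, the sketch below models a documentation record and lists the sections that are still empty. The class, the field names and the sample values are hypothetical: they loosely mirror the categories mentioned above (provider, system, data, output interpretation) and are not a legal checklist of what the AI Act requires.

```python
from dataclasses import dataclass, fields


@dataclass
class SystemDocumentation:
    """Hypothetical record of the information handed to deployers."""
    provider: str = ""
    system_description: str = ""
    training_data: str = ""
    validation_data: str = ""
    testing_data: str = ""
    output_interpretation: str = ""


def missing_sections(doc: SystemDocumentation) -> list[str]:
    """Return the names of sections that are empty or whitespace only."""
    return [f.name for f in fields(doc) if not getattr(doc, f.name).strip()]


# Hypothetical draft, for illustration only.
draft = SystemDocumentation(
    provider="Example Provider S.r.l.",
    system_description="Credit-scoring model for consumer loans",
    training_data="Anonymised loan applications",
)

print(missing_sections(draft))
```

A check like this only confirms that each section exists. Whether the content is complete and understandable for deployers still requires human and legal review.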
